Maria Tzevelekou and Mikhail Tzevelekos – Draco – CodeX Group Meeting March 5, 2026

Maria and Mikhail Tzevelekou, co-founders of Draco, join CodeX to discuss how they’re using AI to tackle one of Europe’s most digitally lagging legal systems. Greece ranks last in the EU for legal sector digitalization — with money laundering cases taking an average of 20 years to resolve and over 100,000 legal documents still undigitized.

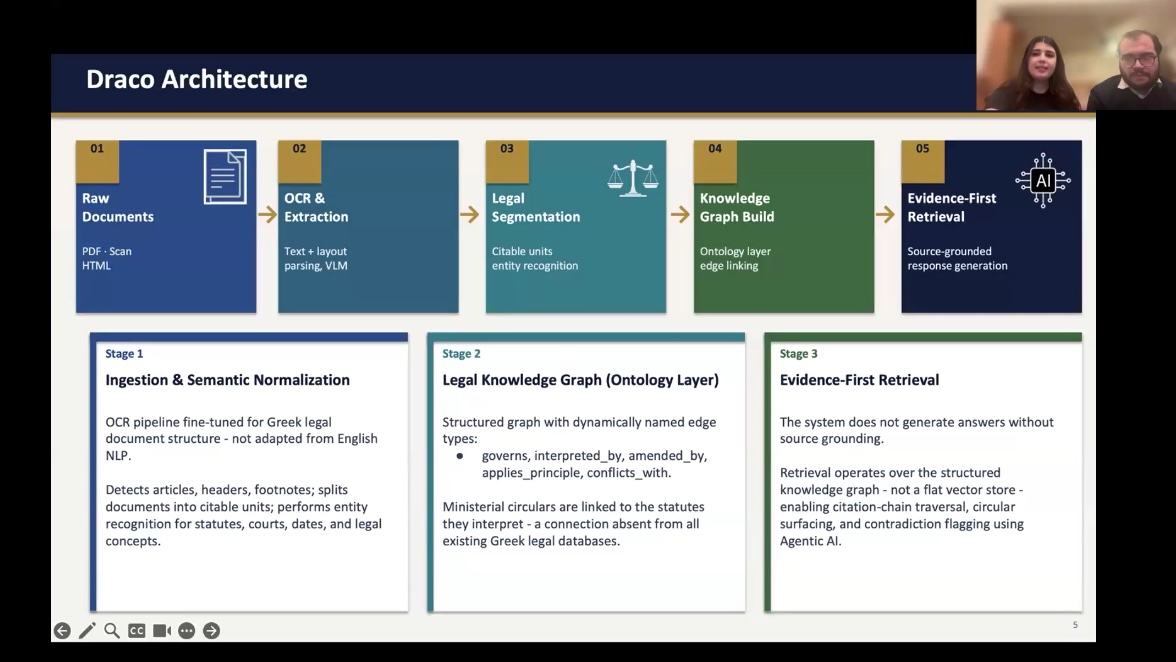

In this session, the sibling duo (a lawyer and an engineer) walk through Draco’s architecture: a specialized ontology-augmented retrieval system built for the unique challenges of the Greek language and legal domain. They demo four core modules — Legal Advisory, Document Drafting, Jurisprudence Research, and Case Intelligence — and discuss their path from a judiciary-focused tool to a full practitioner platform.

They also cover data sourcing, liability considerations, differentiation from existing Greek legal tech, and their roadmap for expanding into other civil law jurisdictions across Europe and Latin America.

Watch CodeX Group Meeting in Youtube

Transcript

Roland Vogl: Welcome, everyone. Let’s get started. Welcome to a Codex group meeting. Today we have two presentations. First, we have Maria Tzevelekou and Mikhail Tzevelekos who are the co-founders of Draco. They are siblings, right? Brother and sister?

Mikhail: Yes, that’s correct.

Roland Vogl: One of you — Mikhail — is a lawyer, and Maria is a technologist.

Mikhail: That’s right.

Roland Vogl: Legal innovation runs in the family. We’ll hear from you about the AI system you’ve built for the Greek judicial system. Over to you, Maria and Mikhail.

Maria: I’m Maria, and this is Mikhail.

Mikhail: I practice law, and Maria is an engineer. She’s currently a student at the Electrical and Computer Engineering School at the National Technical University of Athens. Together we’re building a program called Draco, which aims to accelerate Greek legal justice using AI and modern tools.

The premise for building this came from firsthand experience. I came up with the idea initially and brought it to Maria while I was working as a law clerk during law school. At the time, I was doing a lot of clerical and repetitive work — very little actual law. Most of my days were spent sifting through disorganized data and trying to make sense of it so we could build arguments. That was also around the time large language models had just begun to take off, and I started experimenting with them to see how I could make the clerical aspects of my workload faster, so I could actually practice law.

Maria: I also examined the problem more broadly across Greece. What we’re going to show you is what this problem looks like from a firsthand perspective.

The core problem is that millions of legal documents are computationally invisible. The pictures on your screen are from the Athens District Court — case files, judicial decisions, everything stacked in towering piles of paper. Greece ranks lowest in Europe for digitalization in the legal sector, according to EU statistics from 2024. Specifically, money laundering cases were completed after an average of 20 years — 5.5 years, compared to an average of roughly 2.5 to 3 years in other European countries.

To put it in perspective: a judge will take one of those large case files home, physically read through all the filings and evidence, type out the relevant data, and then attempt to render a judgment.

Mikhail: The Greek legal problem breaks down into three aspects. First, there are seven or more disconnected source systems per query — legal materials spanning both online and physical archives, from Supreme Court archives and the Council of State to administrative courts, private databases, and physical-only archives, with no unified query interface. Second, 90% of working time is spent on information retrieval — based on interviews we conducted with Supreme Court judges, administrative court judges, and lawyers at major and higher law firms — largely because most documents exist as scanned PDFs, unstructured text, or handwritten text. Third, more than 100,000 primary legal documents remain undigitized, and Greek legal language carries additional complexity, retaining polytonic influences that make modern OCR systems far more error-prone than their English-language counterparts.

Maria: To tackle this problem, we built a specific architecture for the Greek domain. We ingest raw documents — PDFs, scans, or typed text — then apply OCR and extraction using language models fine-tuned for the Greek language. That fine-tuning capability became available roughly three months ago. We then segment that knowledge into structured forms, creating a knowledge graph that our agent system can work with. Finally, we have an evidence-first retrieval system designed to reduce hallucinations and generate accurate responses. It’s closer to an ontology-ranked system than a traditional RAG or graph system.

Our key insight is that standard RAG cannot handle these large documents at the accuracy we need, especially for Greek. In English, one token roughly corresponds to one word. In Greek, one word can occupy two or three tokens. Given a fixed context window, it becomes much harder for the system to understand what’s happening — especially when the model hasn’t been trained on large Greek datasets. We drew on the “Lost in the Middle” paper, which speaks to accuracy degradation across long contexts. Rather than relying purely on text similarity, we built an evidence-first ontology-relationship retrieval system, which more closely mirrors how an actual lawyer processes large documents — focusing on relationships between concepts rather than raw text.

Mikhail: Here’s our first document example. This is heavily redacted, drawn from a real case. What you’re looking at is a handwritten doctor’s opinion that is part of a case before the Court of Cassation. Documents like this — among others — are what users upload to the platform to begin their analysis. Even for someone who reads Greek fluently, this document is extremely difficult to parse.

Maria: Here’s a quick overview of the platform. Users log in to Draco, which is typically deployed on-premises for data protection reasons — in Europe, GDPR compliance is non-negotiable. There are several suites available.

The Legal Advisory suite allows users to work within a specific database — either one they’ve built or one we’ve built with them — and retrieve knowledge exclusively from that database to avoid hallucinations.

The Legal Drafting platform is closer to the general-purpose tools available on the market. Users can specify facts from a case and draft documents, drawing on the retrieval methods described above.

The Jurisprudence Suite connects to an external database of the user’s choosing and searches it using the same methods.

The Case Intelligence module is more of a graph-based tool, which we’ll show further.

Mikhail: Users begin by creating a new case file, uploading all of their unstructured documents, naming the case, and classifying it however they choose. This example involves a request for a stay of an administrative penalty before the Court of Cassation. The user has uploaded a large main document — a scanned copy of the motion — along with supporting documents.

The first step is handled by a proprietary agent that extracts the ontology layer from the uploaded data. This serves two purposes: it enables the ontology-augmented retrieval for subsequent queries, and it gives users a visual summary of key elements they may have overlooked. This layer is dynamic — an agent rebuilds it for each case.

Maria: The Legal Advisory suite functions much like a case-specific assistant, grounded entirely in the documents the user has uploaded. Every answer cites a specific passage from those documents. We’ve also built in a refusal mechanism: if a question cannot be answered based on the uploaded facts, the agent will say so explicitly rather than fabricating a response.

We’re translating some of the query examples into English here so you can follow along. In this example, the user asks a specific question and the system surfaces all relevant arguments present in the case, with citations. Certain portions are redacted, but you get the idea.

Maria: In the Document Drafting suite, users can request drafts of any document type. What we’ve done differently here addresses a common complaint from colleagues and judges: whenever they use AI platforms to draft something in Greek, the phrasing sounds awkward. We believe this is because these models are trained predominantly in English and attempt to reverse-engineer Greek, producing unsatisfying results.

Our solution is to allow users to upload documents they’ve previously written themselves. The model can then adapt to that user’s preferred style. We also intentionally limit this to the user’s best drafts — most colleagues told us they want the system to emulate their finest writing, not their rough work.

Mikhail: The styling function analyzes all documents the user provides and extracts a linguistic profile across vocabulary, sentence structure, and argumentation style. Users can also add comments specifying what to include or exclude, making it more accurate to their particular style. We’ve found that law firms often have a house style for specific document types, and that can vary significantly from firm to firm — so capturing that granularity was important.

Maria: The Research Suite allows users to research specific legal topics within their chosen legal database. A secondary agent can then follow up with deeper questions and explore related topics. Every answer includes citations to key statutes so users can read further independently, download them, or continue their research with our assistant.

Mikhail: Our core question is this: how can we use AI to improve the quality of justice in society? And as AI advances so rapidly, how can we implement it thoughtfully — and help others do the same — in fields like law that remain deeply paper-bound and wedded to traditional methods?

Thank you for having us.

Roland Vogl: Thank you both. A few questions from the chat. First — I thought initially you were working directly with the judiciary to help digitize their inventory, but it seems the tool suite is more focused on practitioners. Is that right? More like Harvey or Lex Machina, but specialized for the Greek market?

Mikhail: That’s an interesting point. We started by building a system exclusively for judges and began early discussions about deploying it in a prosecutor’s office. But when we got into Columbia University’s AI Lab accelerator and started speaking with people there, the demand from practitioners was so overwhelming that we had to address it.

Maria: Exactly — we had to meet that demand.

Roland Vogl: You mentioned ontology-augmented retrieval several times. Benjamin asks whether you’re using the community summaries method from Microsoft Graph RAG. Did you use cross-entropy loss when converting to and from the graph representation? I’ll also share some open-source work you’re welcome to look at.

For those less familiar: Microsoft Graph RAG takes documents, converts them into entity triplets, builds those into graph communities, summarizes the communities, vectorizes those summaries, and uses vector search to find the entry point into the graph. A somewhat different approach is to use the graph for actual reasoning — starting with sentences, encoding them into a graph representation, decoding back to language, taking the cosine similarity of the two for a cosine loss, and iteratively improving the encoder and decoder. You can add a second loss measuring how well the knowledge graph fits into a global graph across the entire corpus — not just one document — and use that to distill an ontology as an intermediate representation, analogous to assembly language sitting between high-level code and binary. I wasn’t sure how you were approaching this — obviously it’s proprietary — but feel free to look at what I’ve been working on.

Maria: That’s incredibly interesting, especially the cross-entropy loss. At present, we’re largely working within the available open-source tooling. One limitation of Microsoft’s Ontology RAG is that it requires specific ontologies to be set upfront, which are relatively fixed. Because our use cases are dynamic and vary from case to case, we’ve been building additional layers to accommodate that. But what you’re describing is genuinely interesting, and I’ll definitely look into it.

Roland Vogl: A few more questions. First, where are you sourcing your data from?

Mikhail: The Supreme Court’s website is completely open to the public and uses an enhanced Google search interface, making it straightforward to download judgments — we pulled everything from 2009 to 2026, and it’s entirely free. The limitation is that we’ve only trained on Supreme Court judgments so far, not lower court judgments. Addressing that will require access to government systems where those judgments are stored. For the practitioner-facing product, since we deploy on-premises, we can train on judgments clients have acquired through their practice, in addition to Supreme Court decisions. We haven’t yet partnered with the government on that side, but it’s something we’re looking at.

Maria: We’re also in conversations with other companies that already have datasets, exploring how we can integrate what we have with what they’ve built.

Roland Vogl: Several more questions: How do you handle complexity in multi-party, multi-document cases with varied formats? How do you differentiate from existing Greek players like Coyote AI or Deeplaw.io? How do you think about liability if, despite all your safeguards, the system still produces a wrong output? What about a data flywheel — can one user’s interactions improve outputs for others? And given Greece’s relatively small market, how do you think about building a sustainable business? Is this your beachhead into broader Europe?

Mikhail: On multi-document complexity — we handle the token limit issue using multiple agents working in parallel. We’d rather not go into full detail, but we’ve tested the system at the hundreds-of-documents scale and have been able to maintain the accuracy we need.

On differentiation from existing Greek tools — our assessment is that those products are largely a ChatGPT API with some Greek legal data layered on top. They cater more to a general audience than to legal professionals. That’s the consistent feedback we’ve heard through interviews with judges, prosecutors, attorneys, and law students.

Maria: On liability — we’re currently collaborating with the National School of Judges to build an educational component into the platform. We want to ensure users understand how to use the tool correctly and where its limitations lie.

Mikhail: On market size — you’re right that this problem isn’t unique to Greece. It exists across most civil law jurisdictions: Germany, Switzerland, Turkey, Romania, Bulgaria — and the Latin American market is very large with the same underlying issues. We haven’t explored that yet, but preliminary research suggests the same problem exists there. This started as a research paper and grew into a business, so the potential to scale is real, even if we’re starting here.

Roland Vogl: That’s fascinating. You’re essentially developing an approach that can be transplanted into other legal systems with similar challenges. There are some interesting cross-border opportunities within Europe — certain companies have built around EU-level law that’s shared across member states, and that allows for broader reach more easily. But legal tech is often quite jurisdiction-specific, so the approach you’re taking of building deep domain expertise first seems smart.

Thank you both so much for staying up — it’s midnight in Athens, and we genuinely appreciate it. For anyone who wants to meet Maria and Mikhail in person, they’re planning to be at a legal conference here in April. Please keep us posted on Draco — it’s a really exciting project.