Your Privacy At Risk

Phone-hacking scandals at News of the World. One lawsuit after another alleging privacy breaches by major companies. A backlash over body-scanning machines in airport security lines. It’s been a busy year for those who work at the intersection of privacy law and technology.

“2011 is the year that changed privacy,” says Ryan Calo, who directs privacy and robotics at Stanford Law’s Center for Internet and Society. “The levee broke and there’s been a critical mass of sustained attention that you hadn’t seen before. I think it’s changed things, probably for the better.”

The Internet Age has ushered in a wave of new challenges for technology companies, lawyers, judges, and lawmakers—problems unique to an era when previously unimaginable quantities of personal data are created, collected, shared, and sold every day on the Web. And the development of law to address issues arising from this new information age is lagging.

“Once upon a time most of what we did was not mediated by technology—we bought goods or looked up references in person,” says Calo. “Now we do it through websites.” For instance, you could buy a book with cash and burn it, and nobody would ever know you’d read it. But a book you download to your Kindle or order online will be associated with you permanently. It’s trivially easy for computers to associate different databases, including some supposedly anonymous ones, to gain a more complete profile of individual habits.

“We are dealing in a completely new commodity that we’ve never previously used as a basic commodity,” says Fred H. Cate, JD ’87 (BA ’84), who teaches privacy law at Indiana University. He fears that it’s “wishful thinking” to believe we’ve learned much from the privacy scandals and revelations of the past year. “We’re up against two very big challenges: a post-September 11, 2001, world where the government wants everything, even things it doesn’t know why it wants, and a new world of social networking and Internet marketing where every action is captured and tracked,” he says. “What’s interesting is that people have not shown much concern about either.”

Marc Rotenberg, JD ’87, founded and runs the Electronic Privacy Information Center (EPIC), a leading nonprofit devoted to lobbying and litigating on privacy-related issues. Rotenberg thinks the past year may mark the beginning of a “return to normalcy” where people become more assertive about their privacy rights—in contrast with a decade in which “we’ve been operating in the shadow of 9/11.” EPIC briefed five cases in front of the Supreme Court last term and will brief at least another three cases this coming term, including a much-watched case in which the Court will determine whether police can put secret GPS trackers on suspects’ cars without a warrant.

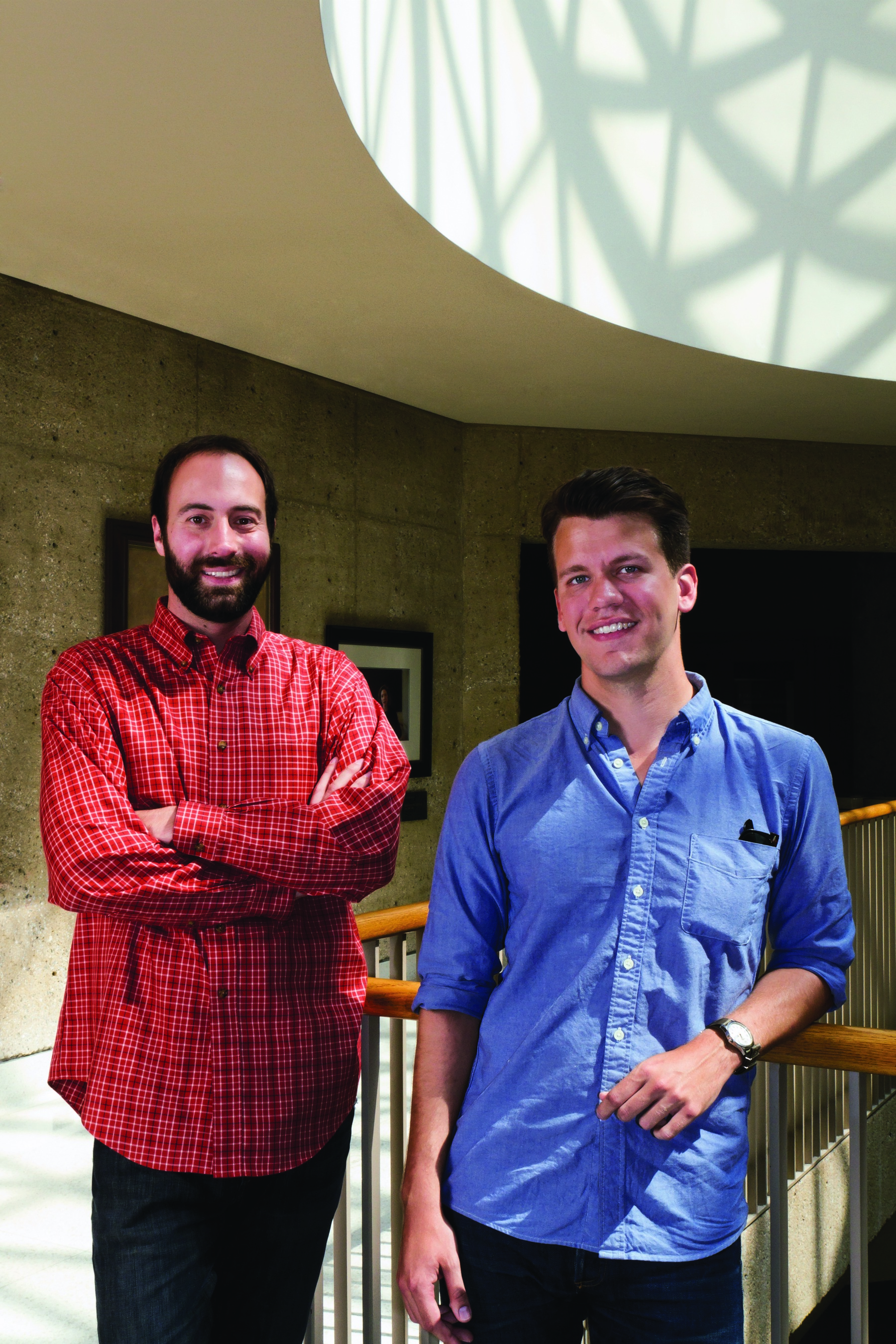

One reason people might be more assertive about privacy rights: a series of revelations about abuses by online marketers, which Stanford Law researchers helped expose. Jonathan Mayer, JD ’12, who is also pursuing a PhD in computer science, has received attention from national newspapers and congressional leaders for helping uncover the practices of behavioral advertisers—online marketing firms that track individual preferences, including Web-browsing history, in order to display more relevant ads. These companies have assembled deeper, more complete profiles of almost every Internet user than many are aware.

“History stealing” is among the most troubling practices Mayer helped uncover. A major advertising network was surreptitiously snooping into whether visitors to its sites had previously visited any of tens of thousands of different, unaffiliated websites. These included websites that advertisers might use to draw or confirm inferences about a visitor’s sensitive financial details, like whether the visitor owed back taxes to the Internal Revenue Service or had looked at Federal Trade Commission resources concerning debt collection. The company reportedly stopped the practice in response to Mayer’s findings.

Even as high-profile abuses draw privacy issues into the spotlight, Stanford researchers struggle with the question of whether regulating Internet privacy will stifle innovation. The past year saw several major congressional actions that got unique traction, most prominently a proposed bill by Senators Kerry and McCain to regulate the commercial collection of individual data.

“One of the lessons of Internet law is that if you regulate too early or in too technology-specific a manner, your regulations quickly become useless or subject to evasion,” says Mark A. Lemley (BA ’88), William H. Neukom Professor of Law. He cites e-commerce legislation in the 1990s, which was premised on the faulty notion that no consumers would ever really buy things online, and the Digital Millennium Copyright Act, which was enacted just a year before peer-to-peer file sharing was introduced and proved utterly ineffective in protecting copyrighted works shared over such services. Lemley worries that privacy scares might lead Congress to overreach and act too quickly, stifling innovation and depriving the market of the chance to strike a proper balance between innovation and privacy.

Rotenberg takes a different position, arguing that free-market solutions may be doomed to fail because companies have little economic incentive to protect privacy, but plenty of financial motive to collect data. “Self-regulation has failed to provide any meaningful privacy protection,” he says, pointing out that companies often abandon self-imposed promises when they become inconvenient. Roland Vogl, JSM ’00, a lecturer at Stanford Law and the executive director of the Stanford Program in Law, Science, & Technology, agrees. “It’s a mystery to me when companies like Facebook profess a commitment to privacy, when their business is based on getting info about their users,” he says. “I’m very skeptical about that.”

At the same time, even well-balanced legislation can produce unintended consequences. “The most productive uses of data might be outlawed to us, while the most destructive uses of data will be widespread,” says Cate. Much of Cate’s work concerns the privacy of medical data, which he says is often poorly protected against the most abusive practices by bad actors yet well protected against the most desirable uses, such as studying the effectiveness of various treatments with anonymized medical history data.

In this uncertain legislative environment, litigators have been battling about how well old laws fit new problems. Defending technology companies against privacy-related suits has become a major part of Mali B. Friedman’s practice at Covington & Burling LLP. Friedman, JD ’06, says the firm has seen a big uptick in suits following each privacy-related revelation in the media. “It’s a little like ‘whack-a-mole’ each time a new complaint pops up in needing to explain why plaintiffs’ theories simply don’t apply to the modern technologies at issue,” she says. “Everyone agrees that some key privacy statutes need to be amended, but it’s really hard to stay ahead of technology and anticipate what the next thing will be.” Robert L. Rabin, A. Calder Mackay Professor of Law, says that the old “privacy torts at common law promise more than they deliver.” One of the most relevant causes of action in an era of social networking and gossip websites—a tort claim that allows recovery for the public disclosure of private facts—was first proposed in the early 20th century in a law review article by Samuel Warren and Louis Brandeis. “The tort itself has never been as fulsome as one might have expected a hundred years ago,” says Rabin. In particular, the whole notion of massive-scale data collection by marketers is so new that the common law tort regime is poorly prepared to deal with it. “It just wasn’t the kind of issue that arose earlier,” he says.

Perhaps unsurprisingly for a chief judge of the U.S. District Court for the Northern District of California, W. James Ware, JD ’72, has overseen a significant number of the cases that Friedman and others have been involved in. While he won’t comment on specific cases he has presided over—including the prominent settlement involving the “Google Buzz” social networking service—he says that much of the litigation he sees these days concerns “the risk that information won’t be properly used or targeted” and “whether or not it’s appropriate [for websites] to realize a commercial advantage from the utility of the service they provide to consumers.”

Judge Ware says he and other judges meet frequently to discuss how to understand and deal with the types of problems posed by cases involving new technologies. “Whether we’re learning fast enough to keep up with technology is another question.” He has even faced some social networking and privacy issues in his own courtroom. It’s increasingly hard, he says, to get juries not to discuss a pending case outside the courtroom in an era when many jurors are tweeting, Facebooking, and otherwise sharing every moment of their lives.

As Congress and the courts figure out whether and how to act in response to new types of privacy violations, some researchers have been exploring hybrid solutions that combine technological know-how with policy savvy. “Do Not Track” has emerged as a prominent compromise proposal: It would allow users to broadcast their desire to opt out of tracking by third parties, including behavioral advertising and marketing firms, using a browser setting or add-on. Mayer, along with a colleague in the computer science department, has been one of the leading voices for Do Not Track—the same basic standard he has proposed for opting out has been adopted by three out of the four leading Web browsers.
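For readers curious about the mechanics: the browser setting works by attaching a header, `DNT: 1`, to each Web request, and it is then up to the receiving site to honor it. The Python sketch below shows roughly what honoring the signal could look like on an advertiser's server. It is only an illustration under stated assumptions: the function names and the "targeted versus generic ad" policy are hypothetical and are not drawn from Mayer's proposal or from any particular company's code.

```python
def wants_no_tracking(headers):
    """Return True when the request headers carry the opt-out signal "DNT: 1"."""
    # HTTP header names are case-insensitive, so normalize them before lookup.
    normalized = {name.lower(): value.strip() for name, value in headers.items()}
    return normalized.get("dnt") == "1"


def choose_ad(headers, profile_ad, generic_ad):
    """Hypothetical policy: serve the generic ad to users who have opted out."""
    return generic_ad if wants_no_tracking(headers) else profile_ad
```

The check itself is trivial, which is the point: the hard part is not the technology but getting the recipient to act on the signal.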

Mayer and his colleagues have more work cut out for them, though. They need either buy-in from the advertisers, who would have to voluntarily agree to respect users’ opt-out preferences, or new legislation that would require these firms to respect them. In advocating for better policies, Mayer says, his legal training has made “all the difference.” He recalls giving a talk to a computer science audience in which he discussed complicated judgment calls that would have to be made—and many of the computer scientists in the room were surprised and frustrated with the lack of a clear black-and-white line. “I felt comfortable saying that in some areas, these lines are a policy question. Computer science doesn’t have a great answer, and it’s going to be a judgment call,” he says. “Policy people are good at big policy questions and big trade-off calls. Technology guys are experts in implementing user choice.”

Barbara van Schewick, associate professor of law and faculty director of the Center for Internet and Society, says that Mayer epitomizes the advantage Stanford has in privacy-related research: its combination of some of the best technological minds with some of the best legal minds. “Approaches that try to solve the problem either purely through law or purely through technology probably are bound to fail,” she says. “You really need a coordinated approach where you put pressure on websites through law and combine that with technology. Jonathan’s work is the connection of sophisticated computer science with legal analysis and legal strategies to help create incentives.”

Mayer hopes that Do Not Track will represent a “toehold”—it will show businesses that giving consumers a choice won’t break their business models entirely.

In the meantime, he’s hard at work tracking the trackers—his research has produced some stunning revelations about the practices used by Internet marketers. Just this summer, he and his colleagues launched a program called “FourthParty” that helps researchers track what these websites are secretly monitoring. Calo describes this as “really empowering technology” and “something no one else is doing.” Mayer says monitoring is critical to enforcement: FourthParty has already helped discover some serious breaches where marketing companies claimed users could opt out but in practice were secretly failing to respect that choice. “Self-regulatory programs have been very deceptive,” he says.

David Thompson, JD ’07, has been another innovator in non-legislative solutions to some of the biggest privacy problems. “The government has been slow to respond, so private industry had to jump in,” he says. A few years back, Thompson helped launch Reputation.com (along with fellow Stanford Law graduate Ross Chanin, JD ’09), a service that helps individuals remove or clarify misleading information that’s posted about them online. (Thompson has also co-written a book, Wild West 2.0, on the problem.) “Google and these data sources were threatening to turn the world into a small town and not in a good way,” he says of Reputation.com’s founding. “This data keeps getting pulled out of context and used in ways people didn’t intend.”

Colette Vogele, a fellow at the Center for Internet and Society and an attorney at Microsoft, founded a nonprofit called “Without My Consent,” similarly designed to help consumers fight back. Without My Consent specializes in providing information for victims of online harassment and abuse.

One of Vogele’s first clients was a woman whose ex-spouse posted a private, intimate video online. “It was pretty shocking,” she says. But the woman was concerned that litigation would only bring more unwanted attention. As a result, Vogele has had to turn herself into an expert at filing suits anonymously. Without My Consent provides information to help victims and their lawyers do so.

Vogele fears that privacy violations on the Internet have far worse consequences than those that privacy tort law was originally designed to combat. “In the past you couldn’t reach an audience with the click of a mouse,” she says. “Now the damage from exposure of a private thing is effectively worldwide. Even if you win your suit, there’s no real ‘unringing’ the bell. Your ability to start fresh is so much more limited.”

Thompson says he’s worried about what he calls the “Facebook over-sharing generation,” which is only beginning to understand the consequences of sharing things online, things that may seem funny at the time but disastrous in a few years—and that may be impossible to ever really take back. Lemley thinks the problem stems partly from technology undermining our traditional notions of privacy. It used to be that there were essentially two categories: public activities that one did out in public streets and forums and private activities that one did in a home with a few friends or family. “Now there’s an intermediate category: what I share with 1,500 friends on Facebook, which is neither private nor public in the traditional sense,” says Lemley.

Another major avenue of research involves making sure consumers at least know what’s happening to their personal data, even if they can’t control it. Researchers have long questioned the effectiveness of the ubiquitous “privacy policies” that appear on websites: long, jargon-infused descriptions of companies’ data collection practices that few consumers pay attention to.

“Notice and choice worked well in a world with a few discrete transactions,” says Cate. “They don’t work in a world where we have millions of transactions a day. Our fundamental premise of privacy rights has proved completely unworkable in the modern world.” He points out that even if consumers are informed of how their data is being used, they rarely have much choice in how to respond. “If I don’t agree [to Apple’s privacy terms], my iPhone becomes a brick.”

Much of Calo’s work has drawn on communications research to see whether other forms of notice strategies might be effective. “Privacy disclosure has been the whole ball game, and it doesn’t work at all because people don’t read privacy policies,” says Calo. But unlike Cate, he hasn’t given up on the basic idea. He has recently written a law review article called “Against Notice Skepticism,” proposing that before we give up on notice, we need to try new ways of communicating data-collection practices to consumers that leverage the very design of websites.

“Before we move to default rules or substantive proposals, we need to rule out the possibility that we’ve used the wrong information disclosure strategy,” Calo says. “We know we can convey information in some non-verbal ways that might be successful where traditional, textual notice has failed.”

Along these lines, Calo and Lauren Gelman, former executive director of the Center for Internet and Society (CIS), spearheaded a CIS project called “WhatApp,” which takes a new approach to notice—instead of having Web applications disclose their own privacy practices, WhatApp solicits anyone to contribute his or her own views on websites’ practices, rating them on a sliding scale. Facebook, for instance, currently scores two out of five on the site’s metrics: privacy, security, and openness.

Another notice-related project Calo and CIS have under way, called “Privicons” (see page 29 for a piece by Ethan Forrest, JD ’12, on this project), seeks to use graphics to allow e-mail senders to describe how they want the recipient to treat their message. If it’s confidential and meant not to be forwarded, for instance, the message could be marked “[!]”; down the road, an e-mail software program might alert the recipient with several warnings if he or she attempted to re-forward an e-mail marked with the “[!]” tag.

Regulating privacy has an added complication because, unlike other areas of government oversight, some of the biggest threats to privacy come “not from private actors but from the government,” says Lemley.

Cate worries that the government keeps capturing and centralizing more data—often without even knowing why. Though frequently justified in news accounts as a counterterror measure, such an approach is misguided, he thinks. “[In 9/11], the problem was that we couldn’t connect the dots,” he says. “It’s not clear that more dots would have helped.”

Rotenberg’s EPIC has been heavily involved in the debate over TSA’s body-scanning machines. The group filed a lawsuit to try to stop the machines and has been very successful in producing relevant information through Freedom of Information Act requests and litigation.

But even as we grapple with problems of privacy intrusions both by the government and by Internet marketers, a bigger privacy dilemma may loom on the horizon, says Jonathan Zittrain, who teaches a course on Internet law every winter at the law school, with half of the participants hailing from Harvard and the other half from Stanford.

“Just as we’re collectively starting to wrap our minds around the very real privacy issues from the past ten years, we’re seeing a whole new breed of privacy issues in which the challenge isn’t intrusion by government or business, but by individual friends and strangers as they record and share their own lives and our parts in them,” says Zittrain. “Once you combine technologies like social networking, facial recognition, posting photos online, and geolocation, it’s as if our whole lives are being streamed online.” These technologies may find troubling applications in the next few years: For instance, it may become trivially easy to use computers to generate lists of people entering a particular health clinic.

“It will be tough to figure out how to adapt the lessons we’ve learned, grappling with government and businesses, when the potentially privacy-compromising parties are people on the street,” he says. “We’ve met the enemy and it is us.”

3 Responses to “Your Privacy At Risk”

Marc Rotenberg

To the Editor,

It was nice to see the Stanford Lawyer raise the question of Internet Privacy in the Fall 2011 issue (“Your Privacy at Risk: Hidden Dangers of Life on the Internet: Can Laws Protect Us?”)

But it was also somewhat disappointing to see how the article ultimately answered the question asked, which was somewhere between “this stuff is too difficult to legislate” and “it’s actually our fault.” (Particularly silly is the argument that because some government conduct violates privacy, the government should not regulate privacy. Under that theory, environmental regulation would be improper because the government also generates pollution.)

Fortunately, the courts, the Congress, international organizations, and just about everyone else is working to update law to respond to new challenges brought about by changes in business practices and government investigative techniques.

To provide just a few recent examples: the Federal Trade Commission reached important settlements with Facebook and Google concerning their business practices, the US Supreme Court held that the warrantless use of a GPS tracking device is impermissible, the European Commission set out a comprehensive proposal to update the EU Data Protection Directive, and the Congress is considering legislation on a wide range of topics from locational privacy to data breach notification and limiting the use of airport body scanners.

We can draw a particularly relevant lesson from the recent decision of the Court in the US v. Jones. There may be a disagreement as to how best the law should protect privacy, but there is no disagreement as to the need to protect privacy.

Best regards,

Marc Rotenberg, Stanford Law JD ’87

Washington, DC

Doc Hollidaye

“Thompson says he’s worried about what he calls the “Facebook over-sharing generation,” which is only beginning to understand the consequences of sharing things online, things that may seem funny at the time but disastrous in a few years—and that may be impossible to ever really take back.”

My belief is that a public warning should be issued to people about the risk they take in using Facebook and other social networking sites online. We have groups of criminals that have very deep pockets. They can hire a criminal network to use surveillance in communities and steal online information, so blatantly putting personal information out there is just really stupid.

Something as simple as leaving your cellphone unattended can give way to information being stolen and used. It’s simple to get tracking technologies that are used on phones, cars etc. What we also fail to realize is that some of these devices are not sold here in this country. They are technologies coming from other countries and may be considered illegal here. So how does our government track what they haven’t yet seen or had experience with?

Marc wrote above:

“Fortunately, the courts, the Congress, international organizations, and just about everyone else is working to update law to respond to new challenges brought about by changes in business practices and government investigative techniques.”

Yes indeed they are way behind and it is my hope that they will be able to speed things up. Trouble is there may be more communities than we think that have a stranglehold on them by large groups of organized crime/factions operating in this way. When you get groups that start using sophisticated surveillance they can then monitor communities in order to protect their interest and use complex methods to “shut everyone up”. This is not the type of America that we want to build. Believe me, the sooner our laws catch up and our local authorities catch up, the better off we will all be.

It is unfortunate that technology has brought about great treasures but it has also brought about better networking for criminal organizations at the expense of our citizens’ freedoms and quality of life.

Doc Hollidaye

“In the past you couldn’t reach an audience with the click of a mouse,” she says. “Now the damage from exposure of a private thing is effectively worldwide. Even if you win your suit, there’s no real ‘unringing’ the bell. Your ability to start fresh is so much more limited.”

Sounds to me like this is opening up a pool of litigation and damages to be sought and had by the victims.